Google is turning Android from a phone operating system into something closer to a personal assistant that acts on your behalf. At its Android Show event today, the company announced Gemini intelligence, a set of AI features rolling out to select Android devices later this summer that can take over routine tasks entirely, as long as you tell them to.

The centerpiece is task automation. Ask Gemini to grab a last-minute spot in a fitness class, find standing-room tickets for a show, or order dinner through DoorDash on your way home from a hike, and it will open the relevant app, navigate to what you need, and complete the transaction. You confirm with a single tap at the end. If you see a flyer or brochure for something you're interested in, you can take a photo of it, ask Gemini to find a similar option on Expedia with good ratings and availability, and it starts working in the background while you do something else.

Also new is Chrome auto browse, which arrives in late June and extends the same task automation to the web. Gemini inside Chrome on Android will handle tasks like scheduling appointments, tracking down out-of-stock items, and party planning, without you having to direct every click.

For anyone who has ever lost 10 minutes filling out a parking app form because they couldn't remember their license plate number, the intelligent autofill update will be welcome. Instead of jumping between apps to find your passport number, driver's license, or rental car plate, Gemini fills in all of it for you automatically, across Android apps and Chrome, with a single tap.

There's also Rambler, a new feature built directly into Gboard, Android's default keyboard. Instead of transcribing your speech word for word, it lets you speak naturally, with backtracking, filler words, and changes of mind, and Gemini converts the result into clean, readable text. It works in any app, not just Google's own, and supports switching between multiple languages in the same message.

The part Google isn't advertising quite as loudly

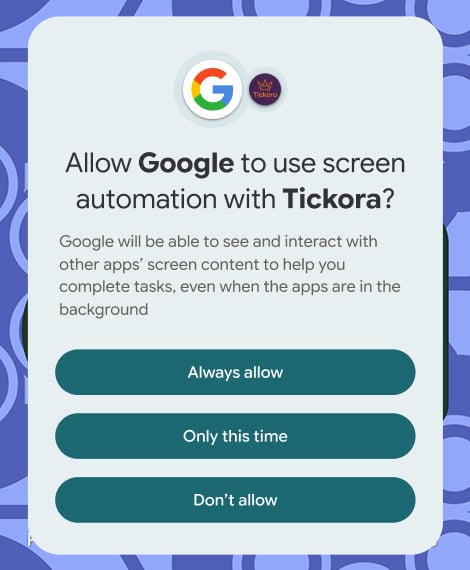

Task automation activates only when you explicitly ask for it, and it works only within apps you've approved. It stops when the task is complete. Google is clearly trying to get ahead of the obvious concern: letting AI act autonomously inside your apps means handing over a significant amount of control.

Those are reasonable guardrails, but they raise real questions about what "approved" means in practice, and how granular that control actually is. Google didn't specify during the briefing. The on-device vs. cloud question also got a carefully worded non-answer: features will use "whichever combination of technologies delivers the highest quality experience that is private and secure." Translation: some of this processing happens on your phone, some of it happens on Google's servers, and it will vary by feature.

The bigger caveat is timing. Gemini intelligence launches first on Samsung Galaxy and Google Pixel devices "later this summer," with additional devices following in waves throughout the year. If you're not on a flagship device, you may be waiting a while for most of these features, and Google was deliberately vague about which features reach which devices on what timeline.

The features themselves are useful in concept: Filling out forms, booking routine services, and cleaning up voice dictation are things people actually struggle with on mobile. Whether the execution matches the demo-day promise is something we'll be watching closely when these features reach our hands.

[Image credit: screenshots via Google/Techlicious, phone mockup via Canva]